Building AI tutors that actually work 🧠

Why most AI learning systems fail — and what they’re missing

Parsnip is building a new kind of AI learning system: one that models skills, progress, and practice explicitly, and understands learners the way great teachers do. We’re looking for a partnership where we’ll work hands-on to deploy it in a novel domain.

Any technology-based learning approach — whether it’s built for students, employees, customers, or the general public — runs into the same wall 🧱.

You can produce fantastic content. You can add assessments, dashboards, AI-generated explanations, even live AI avatars. And yet, learning outcomes somehow plateau quickly.

This isn’t for lack of effort, but because learning technology still doesn’t understand the learner.

Over 40 years ago, educational psychologist Benjamin Bloom described the 2-sigma problem: students working with a skilled 1:1 tutor outperformed those in traditional classrooms by two standard deviations. Technology-enabled personalized learning has long promised to close that gap, at scale, for everyone. Why then, despite decades of progress, does that promise remain unfulfilled?
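To put a number on "two standard deviations": if we assume classroom scores are roughly normally distributed (an assumption made here purely for illustration), a two-sigma gain places the average tutored student near the 98th percentile of the conventional class. A quick check:

```python
from statistics import NormalDist

# Assumption: scores in the conventional classroom are normally distributed.
# A student 2 standard deviations above the class mean outperforms
# this fraction of that class.
effect_size_sd = 2.0
percentile = NormalDist(mu=0.0, sigma=1.0).cdf(effect_size_sd)

print(f"{percentile:.1%}")  # about 97.7%
```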

The answer is subtle: the core of effective tutoring isn’t conversation, presentation, or even feedback. It’s the teacher’s theory of mind about the learner.[1] A great tutor or coach develops and continually updates a mental model of what someone knows, what they can do, how they’re progressing, and what they need to practice next.

Most learning technology, including today’s AI-driven systems, doesn’t build this model. Courses, content libraries, and even conversational agents treat learning as exposure to information, personalized only on the surface. Without an underlying model of skills and progression, “AI tutors” will remain fundamentally ineffective as teachers, however articulate and believable they sound.

Until recently, this limitation was unavoidable. Explicitly modeling skills, structuring knowledge, and tracking learner progress all the way from theoretical knowledge to real-world practice required enormous manual effort. It only made sense in specific, standardized domains, if at all.

But we believe this is exactly where AI will change the equation for education: not by generating more multiple-choice questions, better explanations, or more convincing avatars, but by finally making it feasible to systematically structure knowledge and model the process of learning itself.

With a structured map of knowledge, AI can become the “GPS”

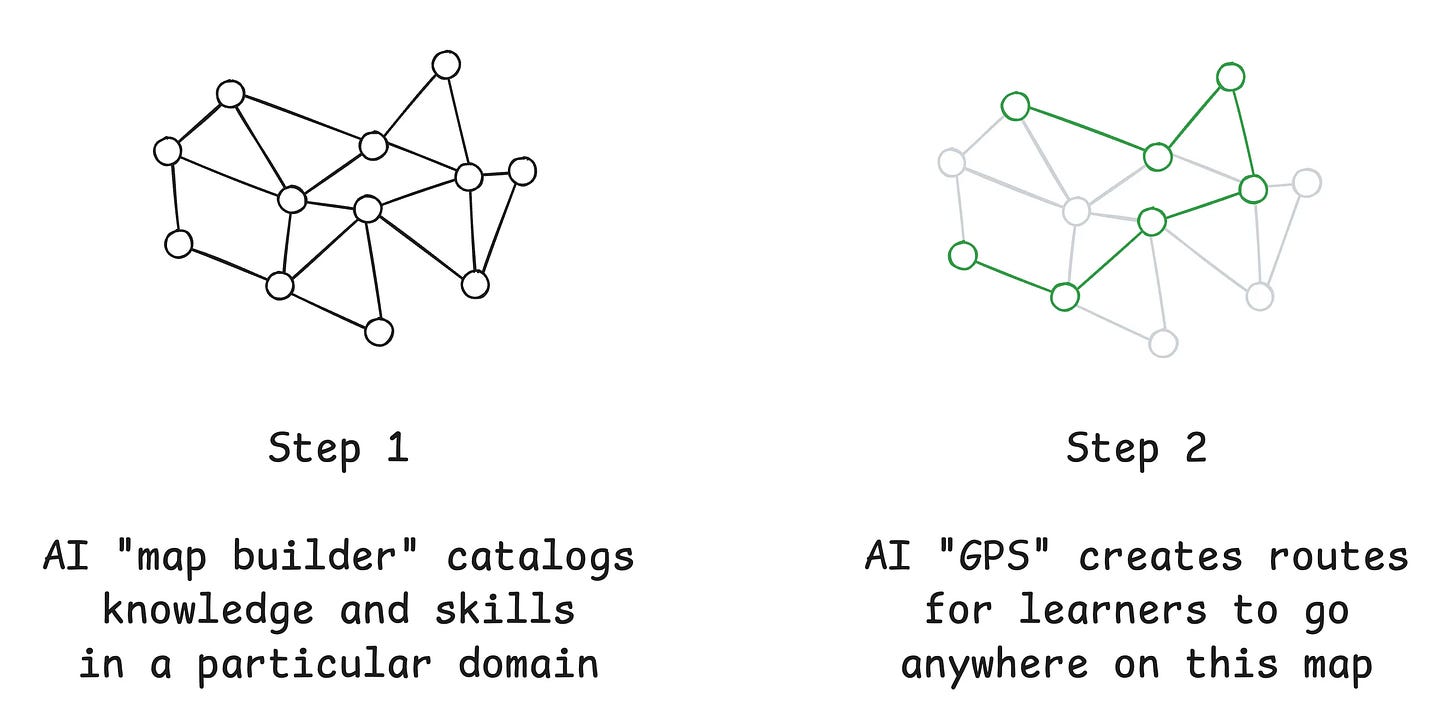

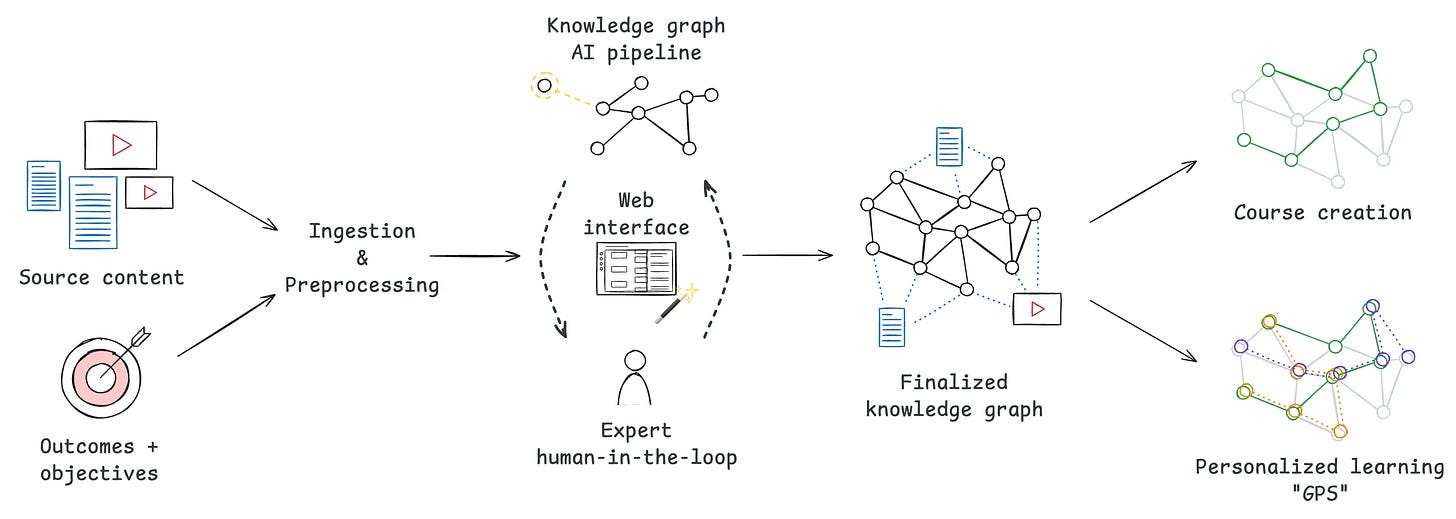

We’re building Parsnip Knowledge, an AI-native learning platform with two conceptual parts:

A map builder for knowledge and skills that learners can attain. This map creates structure from unstructured knowledge, enabling a theory of mind — a way for an AI system to model learners and make inferences about them.

A “GPS” system, with this map as a foundation, that becomes a personalized tutor/coach using multiple modalities: personalized content creation, assessments, and feedback loops that measure progress, modeling how human teachers understand a student’s knowledge and personalize their growth.

Building and maintaining these knowledge repositories and leveraging them for teaching has always been possible in theory, yet prohibitively labor intensive, making it worthwhile only for highly standardized, mass-market curricula. In domains without existing structure or schools, investing this effort would be hopelessly unrealistic. Yet, with AI systems to organize and assemble knowledge, it becomes possible to not only build these maps, but to create powerful “GPS” personal tutors that are especially impactful for domains where learning is messy, tacit, and practice-based.
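To make the map-and-GPS idea concrete, here is a minimal sketch in Python. It is not Parsnip Knowledge's actual implementation: the skill names, the `SKILL_MAP` structure, and the `route_to` function are all hypothetical, chosen only to show how a prerequisite map lets a system compute a route from what a learner already knows to a goal skill.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical skill map: each skill lists the skills it builds on.
SKILL_MAP = {
    "knife basics": set(),
    "heat control": set(),
    "dicing an onion": {"knife basics"},
    "sauteing": {"heat control"},
    "building a pan sauce": {"sauteing", "dicing an onion"},
    "baking bread": {"heat control"},
}


def route_to(goal, known, skill_map=SKILL_MAP):
    """Return the skills still to learn, prerequisites first, to reach `goal`."""
    # Walk back from the goal, stopping at skills the learner already has.
    needed, stack = set(), [goal]
    while stack:
        skill = stack.pop()
        if skill in needed or skill in known:
            continue
        needed.add(skill)
        stack.extend(skill_map[skill])
    # Keep only dependencies inside the route, then order them.
    sub_map = {s: skill_map[s] & needed for s in needed}
    return list(TopologicalSorter(sub_map).static_order())


route = route_to("building a pan sauce", known={"knife basics"})
print(route)
```

The route skips anything already known and anything off the path to the goal (here, "baking bread"), which is the difference between a map with directions and a fixed syllabus.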

How Parsnip Knowledge creates a theory of mind

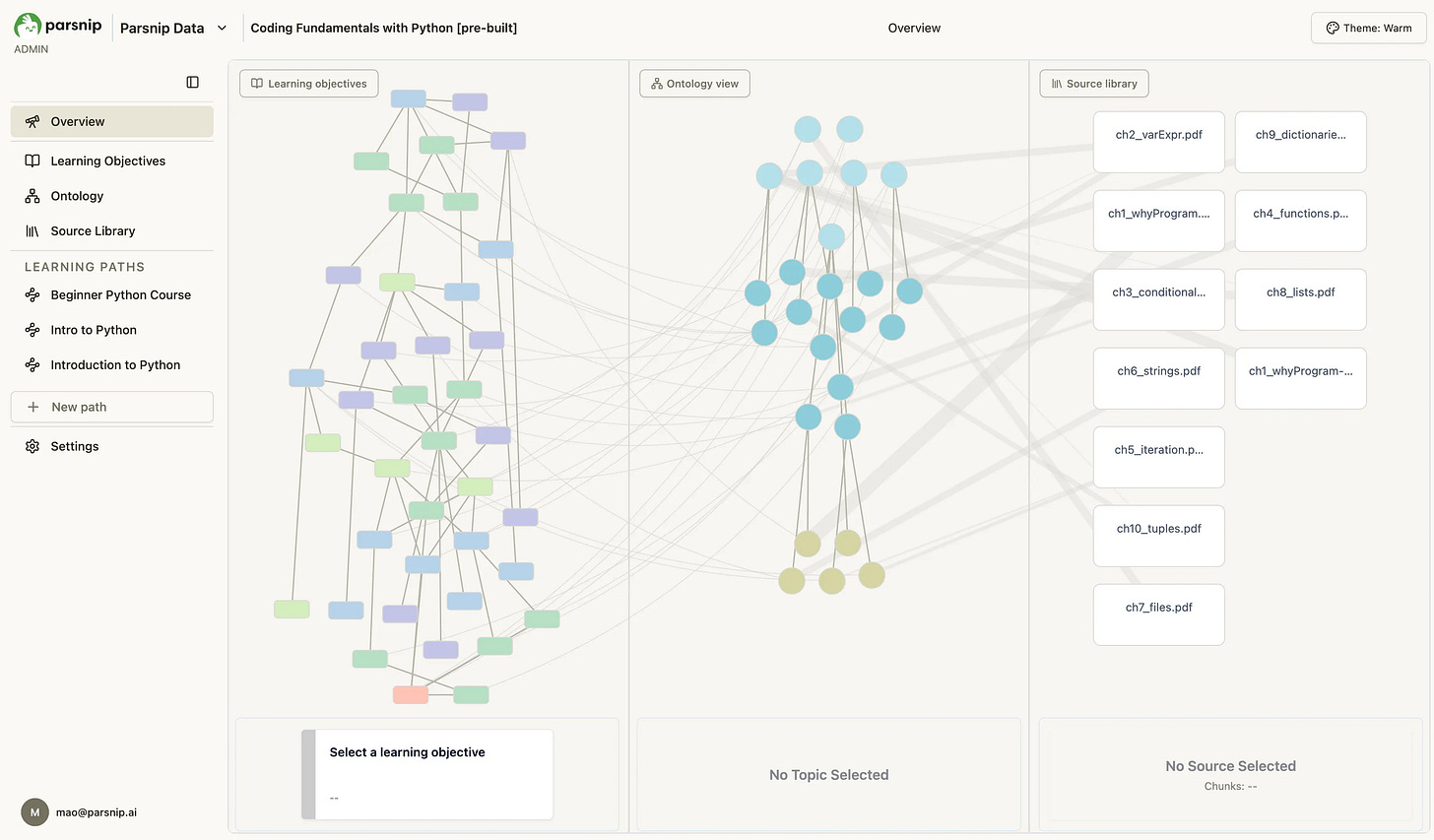

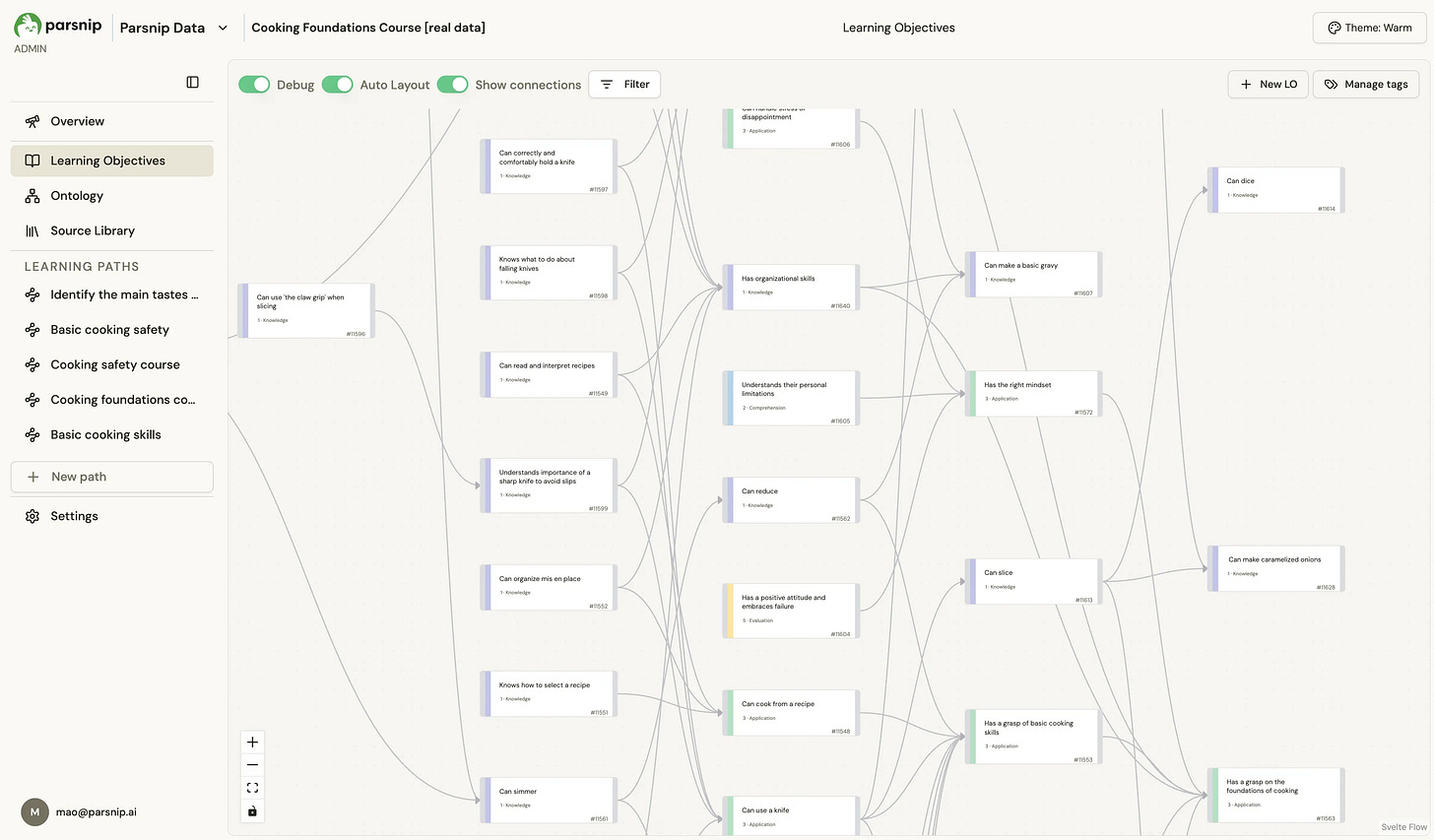

At its core, Parsnip Knowledge models learning as movement through a structured map of skills and concepts. These maps are represented as pedagogically-centered knowledge graphs (or “skill trees”) that define what can be learned and how concepts build on one another.

Each learner’s evolving position within this map is modeled explicitly, creating a continuously updated theory of mind: the same mental model great teachers have of what a learner knows, is able to do, and is ready to practice next. This system connects that structure to grounded, human-interpretable content, while AI pipelines assist in building, maintaining, and improving the graph wherever manual effort would otherwise be prohibitive. Through data visualizations, we make everything in the system visible and explicit.

Compared to typical AI-driven learning approaches built around LLMs, Parsnip Knowledge’s design differs in several key ways:

Interpretable by design: rather than working with a black box, subject-matter experts can inspect and verify both structure and content

Human-in-the-loop: AI-assisted creation, but guided by expert judgment and experience

Domain-agnostic: the same architecture supports programming, cooking, and beyond

Grounded generation: graph structure + trusted sources lead to predictable, deterministic content generation with minimal hallucination

With this foundation, previously unattainable capabilities follow naturally:

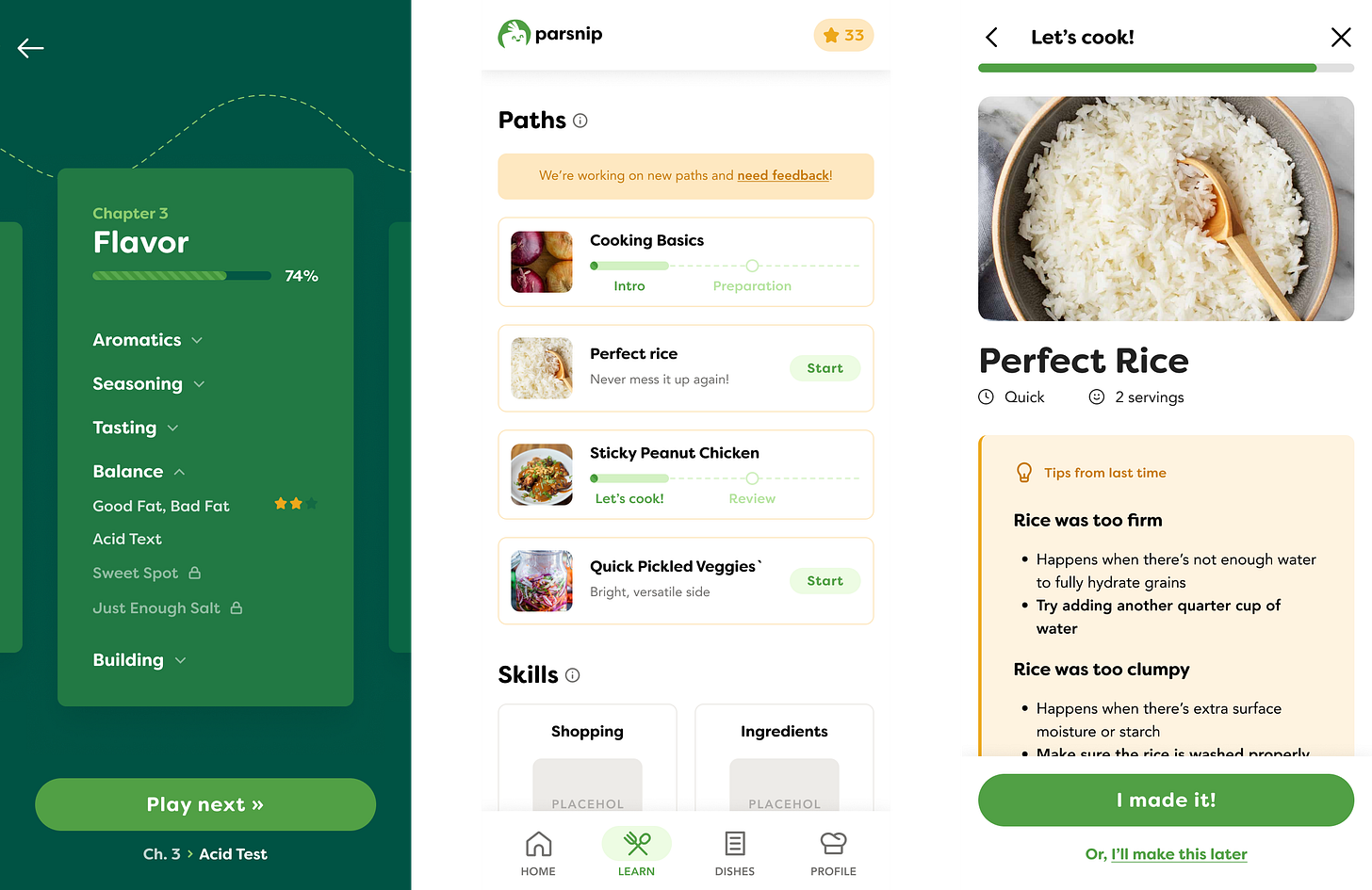

Skill-aware personalization: instruction and practice based on what a learner knows and can do

Explicit, measurable progress: concrete, quantifiable views of growth at both individual and cohort levels

Automated 1:1 tutoring & coaching: an AI “GPS” that guides learners through practice and feedback loops

Unlocking hard-to-structure domains: structured knowledge can be created even where content is fragmented, informal, or previously ineffective

Many learning systems share a common weakness: the inability to turn theoretical understanding into real-world practice. By modeling learning explicitly and continuously, Parsnip Knowledge enables tutors — both human and AI — to select the right practice at the right time, adapt instruction as learners progress, and support application in real contexts.
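As a rough illustration of modeling learning "explicitly and continuously", the sketch below keeps a per-skill mastery estimate, updates it after each practice attempt, and picks what the learner is ready to practice next. Everything here is an assumption made for the example (the `LearnerModel` class, the moving-average update, the 0.8 threshold, the skill names); it is not a description of how Parsnip Knowledge works internally.

```python
from dataclasses import dataclass, field

# Hypothetical prerequisites: skill -> skills it builds on.
PREREQS = {
    "knife basics": [],
    "dicing an onion": ["knife basics"],
    "heat control": [],
    "sauteing": ["heat control", "dicing an onion"],
}

MASTERED = 0.8       # assumed threshold for "can do this reliably"
LEARNING_RATE = 0.5  # assumed weight given to the newest observation


@dataclass
class LearnerModel:
    # Estimated mastery per skill, from 0.0 (unseen) to 1.0 (fluent).
    mastery: dict = field(default_factory=dict)

    def observe(self, skill, score):
        """Blend a new practice result (0.0 to 1.0) into the estimate."""
        old = self.mastery.get(skill, 0.0)
        self.mastery[skill] = old + LEARNING_RATE * (score - old)

    def ready_to_practice(self):
        """Skills not yet mastered whose prerequisites all are."""
        def done(skill):
            return self.mastery.get(skill, 0.0) >= MASTERED
        return [s for s, pre in PREREQS.items()
                if not done(s) and all(done(p) for p in pre)]


learner = LearnerModel()
for score in (1.0, 1.0, 1.0):  # three successful knife drills
    learner.observe("knife basics", score)
print(learner.ready_to_practice())  # ['dicing an onion', 'heat control']
```

Because the estimate moves with every observation, a failed attempt lowers it again and the skill resurfaces for practice, so the model tracks the learner's current ability, not a one-time completion checkbox.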

We believe the future of education is fundamentally collaborative: AI handles scale, structure, and adaptation, while humans provide judgment, context, and expertise. This system is designed to make both considerably more effective.

Moreover, we’ve already been stress-testing it at scale in a messy, real-world domain…

A case study: teaching millions of users how to cook

Crucially, the system we’ve described isn’t just a theory. It’s the foundation of a live product, Parsnip, a learn-to-cook app that’s been rated 4.9⭐ in the US App Store and already has over 100k downloads.

Parsnip pushes this learning system to its limits in a real end-user environment, and remains a core proving ground for the platform.

Cooking is a real-world domain with millions of potential users where learners and their learning goals are highly heterogeneous, turning knowledge into practice is tricky, and no standardized education or school currently exists. It’s also a fantastic laboratory that enables ongoing improvements for our AI and pedagogical effectiveness, through the constant real-world learning experiences of our users.

If this sounds familiar to you…

If you’re building a learning, coaching, or training product and have felt the limits of content-first or chatbot-driven approaches, this will likely feel familiar.

Parsnip Knowledge is designed for domains where outcomes matter, skills are developed through practice and judgment, and learners start from very different places. It’s especially well-suited to cases where knowledge is fragmented, informal, or expensive to structure — and where static courses struggle to translate into real-world capability.

We’re looking to work closely with one partner to apply this system deeply in a new domain, shaping both the product and its next stage of development. This is a hands-on collaboration: direct access to our founders, shared problem definition, and a focus on proving impact rather than shipping surface-level features.

If several of the following are true for your business, we’re likely to be a fit:

Learning outcomes matter beyond certification or completion

Skills are built through practice, judgment, or habit — not just information transfer

Learners vary widely in background, goals, or starting ability

Organizing and maintaining high-quality content requires collating myriad sources of unstructured knowledge, making it slow, manual, or costly

Personalization would materially change engagement or results

Some particularly suitable domains include management and leadership training, on-the-job skill development, and coaching-intensive professions. Other categories include life skills without effective training: relationships, emotional skills, parenting, health/fitness, and personal finance. These are just some examples, as these underlying challenges extend to any area where learning has resisted standardization.

Meet our founding team

If you’ve read this far, you likely already see how we can help solve the challenges you’re working on. We’re at a stage where Parsnip Knowledge is real, working, and ready to be pushed further in close collaboration with a thoughtful partner. If you’re exploring how to move beyond content-first or chatbot-driven learning, we’d be happy to talk — and delighted to give you a demo.

Please write to Andrew, co-founder/CEO, or connect on LinkedIn!

Andrew Mao: PhD in computer science @ Harvard; computer science + Wharton @ Penn; Postdoc @ Microsoft Research; ML scientist + PM at CTRL-labs (deeptech startup sold to Meta for ~$1B). In his research career, Andrew built a custom, real-time experimentation platform to conduct a prisoner’s dilemma experiment on the Internet that was >10 times longer than any extant experimental economics study.

Dan Sosa: PhD in AI/bioinformatics @ Stanford, computer science + molecular biology + Sloan @ MIT. Dan spent his PhD fine-tuning LLMs to build knowledge graphs from millions of biomedical research articles for drug discovery — the exact skillset for designing the AI pipelines and data structures that power Parsnip Knowledge.

[1] In general: LLMs have word models, while experts have world models. For any given use case, the lack of a world model prevents AI from effective reasoning, decision making, and strategizing, and having a world model unlocks all of those things. Here, we’re essentially building a world model for teaching and learning.
